The teacher evaluations recently posted on the Los Angeles Times Web site deserve a careful but skeptical reading.

I studied them both as a grandparent of public school kids and as a journalist who writes about schools for The Jewish Journal and LA Observed, a Web site that focuses on local politics, government and the media. I also know how important the public schools are to Jewish families concerned about their children’s education.

Like many other people, I was drawn in by the premise behind the series of stories in the Times and on its Web site: the promise of a new, trailblazing way of evaluating teachers. The Times has posted the names and evaluations of about 6,000 elementary school teachers on latimes.com. On the first day that the series ran in the print edition of the newspaper, two teachers were singled out, one shown in a large picture, as among the least effective in the Los Angeles Unified School District (LAUSD).

I was offended by the way the two teachers were singled out and the fact that thousands more names and ratings have been posted on the Web site. Even in their weakened states, newspapers, especially one as big as the Times, are powerful instruments. I began to dig into the evaluation system.

The Times evaluations are based on the value-added system. As the Times explained it, “In essence, a student’s past performance on tests is used to project his or her future results. The difference between the prediction and the student’s actual performance after the year is the ‘value’ that the teacher added or subtracted. … If a third-grade student ranked in the 60th percentile among all district third-graders, he would be expected to rank similarly in the fourth grade.” If that student’s test scores fell, it would show his teacher was ineffective. If they rose, the teacher would be classified as effective.
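To make the arithmetic concrete, here is a minimal sketch of the simplified description quoted above. It is not the Times' actual model, which is more elaborate. The function name, the sample class and the choice to average the gaps across a class are all assumptions made for illustration.

```python
from statistics import mean


def value_added(students):
    """Average gap between actual and predicted percentile for one class.

    Each student is a (prior_percentile, actual_percentile) pair. Following
    the simplified description in the text, the prediction is simply the
    student's prior percentile. Averaging the gaps is an assumption.
    """
    gaps = [actual - prior for prior, actual in students]
    return mean(gaps)


# Hypothetical class: percentile ranks last year and this year.
classroom = [(60, 65), (40, 38), (75, 81)]
print(value_added(classroom))
```

In this made-up class, the gaps are +5, -2 and +6, which average to a gain of 3 percentile points credited to the teacher.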

A strong caution on the value-added system came from Mathematica Policy Research, an organization working for the U.S. Department of Education, which is headed by Education Secretary Arne Duncan, a supporter of value-added analysis. Mathematica’s analysis offered “evidence that value-added estimates for teacher-level analyses are subject to a considerable degree of random error.”

When I asked Times Assistant Managing Editor David Lauter about this, he told me, “We took several steps to deal with the inherent error rate that is involved in any statistical measure.”

In the Times evaluation system, the possibility of error is expressed as confidence levels, in terms of plus and minus. The margin of error for English scores is plus or minus five for the highest and lowest scorers. For math, it is plus or minus seven. Accuracy is even lower for teachers who score midrange.
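A rough sketch shows why margins of this size matter. The margins of five and seven come from the figures above; the teacher scores, the function names and the assumption that scores sit on a 0-to-100 scale are hypothetical, since the units are not spelled out here.

```python
ENGLISH_MARGIN = 5  # plus or minus, from the figures cited above
MATH_MARGIN = 7     # plus or minus, from the figures cited above


def score_range(score, margin):
    """Return the (low, high) range a reported score could really fall in."""
    return (score - margin, score + margin)


def ranges_overlap(a, b):
    """True if two (low, high) ranges share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Two hypothetical teachers whose math scores differ by eight points.
teacher_a = score_range(62, MATH_MARGIN)
teacher_b = score_range(70, MATH_MARGIN)
print(teacher_a, teacher_b, ranges_overlap(teacher_a, teacher_b))
```

When the two ranges overlap, as they do for these invented scores, the data cannot say with confidence which teacher is actually the stronger one.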

To see how this worked, I looked up a teacher I know to be outstanding. The teacher was rated “more effective,” just short of being rated most effective, in English and on his overall score. His highest ratings were in math.

Then I learned something interesting. He did not teach his class math. This school used team teaching. He taught English and other subjects. But when it came time for math, the students went to the classroom of a teacher who specialized in math instruction. I thought this would seriously skew test results. I asked LAUSD officials about it.

One official said some, but not a majority, of Los Angeles schools engage in team teaching. It’s up to the principals and the teachers. At the beginning of the school year, the students in a class are assigned to a particular teacher. We’ll call him or her the “official teacher.” In elementary school, this teacher is responsible for teaching all subjects to the students assigned to him or her. But in a team teaching school, the students may go to the math teacher’s classroom for instruction. Yet the “official teacher” administers all the tests and takes the credit or blame for students’ performance in all subjects. “They will get credit for teaching math, even though they didn’t teach math,” one official told me.

This seems to be a huge flaw in the teacher rating system. There are others. Statistical analysis of this kind depends on random sampling. But a study by the Economic Policy Institute said test results usually do not come from classes where students were enrolled at random or by chance. Instead, classroom assignments are made by principals based on such factors as spreading high and low achievers among classrooms, separating troublemaking friends and yielding to parental pressure.

Lauter told me the Times analysis took these factors into account and produced “unbiased results.”

That’s for the reader to determine. Test scores and the value-added system are useful tools in evaluating teachers. But they are imperfect and fall short of telling the whole story.

The Times should have done a better job of revealing flaws in its system. When parents look up a teacher on the database, they should know the ratings have a substantial error rate and, at best, are a limited measure of a teacher’s ability.

As the old saying goes, don’t believe everything you read in the newspapers.

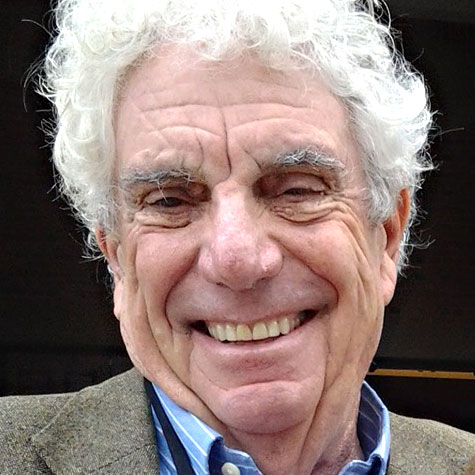

Bill Boyarsky is a columnist for The Jewish Journal, Truthdig and L.A. Observed, and the author of “Inventing L.A.: The Chandlers and Their Times” (Angel City Press).
